Runs close to the operator

Credentials, kubeconfig contexts, and bring-your-own model keys stay in the local runtime that already has access to the environment.
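For Kubernetes, "stays in the local runtime" concretely means reading the same kubeconfig files `kubectl` would read on that machine, not uploading them elsewhere. The minimal sketch below follows kubectl's documented lookup: use the `KUBECONFIG` list if it is set, otherwise `~/.kube/config`. The function name is hypothetical and does not describe Clanker Cloud's code.

```python
import os
from collections.abc import Mapping
from pathlib import Path
from typing import Optional


def kubeconfig_paths(env: Optional[Mapping[str, str]] = None,
                     home: Optional[Path] = None) -> list[Path]:
    """Return the local kubeconfig files to read, in merge order (hypothetical helper).

    If KUBECONFIG is set, it is a list of paths separated by the OS path
    separator (":" on Linux/macOS, ";" on Windows). Otherwise fall back
    to the default ~/.kube/config.
    """
    env = os.environ if env is None else env
    home = Path.home() if home is None else home
    raw = env.get("KUBECONFIG", "")
    if raw:
        # Skip empty entries such as the one left by a trailing separator.
        return [Path(p) for p in raw.split(os.pathsep) if p]
    return [home / ".kube" / "config"]
```

Nothing here leaves the machine: the runtime resolves file paths the operator already controls.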

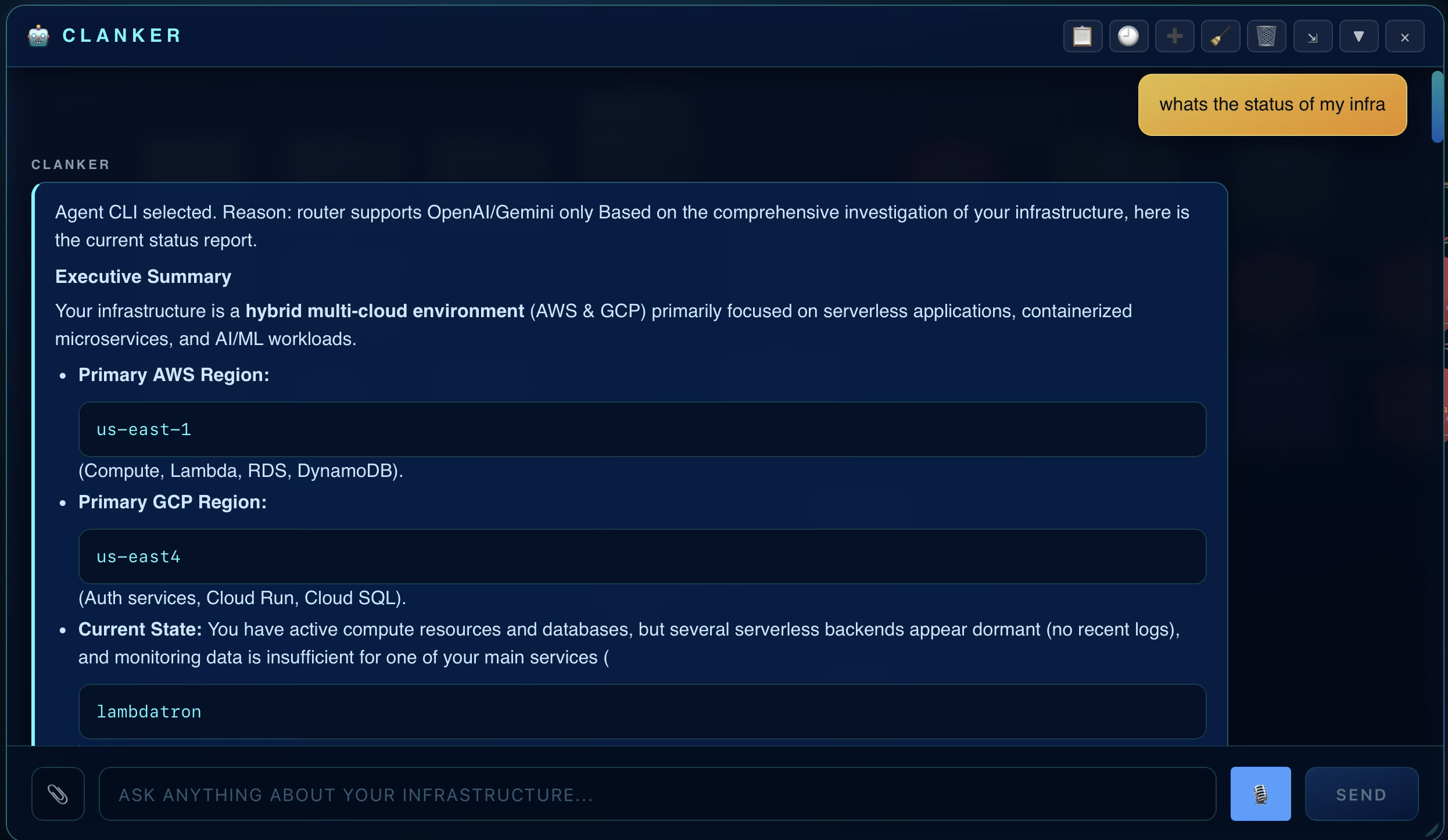

Local-first AI DevOps is an operating model for infrastructure work where live context, AI reasoning, and operator approvals stay close to the machine already trusted to talk to cloud and cluster APIs.

It is not a new observability backend, pager system, or IaC orchestrator. It is the context and action workspace that sits between those systems and the operator, using existing access patterns rather than asking teams to re-home privileged credentials in another hosted layer.

Clanker Cloud is not a full observability backend; it is a local-first infra context and action workspace.


The category is about answering questions and planning actions from real cloud, Kubernetes, GitHub, Vercel, and edge context instead of generic chat memory.

Local-first AI DevOps works with observability, incident, cost, and delivery tools rather than pretending one app replaces all of them.

Clanker Cloud is the practical implementation here: local runtime, reviewed plans, multi-provider context, and explicit approval before change.

The current product positioning covers cloud providers, Kubernetes, GitHub, and bring-your-own AI keys from one local operating surface.

| Layer | What it does | What it is not |
|---|---|---|
| Observability backend | Stores and queries metrics, logs, traces, dashboards, and alert history | Not the same as a local-first context workspace |
| Incident platform | Routes pages, schedules responders, and manages escalation paths | Not the same as evidence gathering and reviewed action planning |
| Infrastructure context and action workspace | Queries live provider state, compares options, drafts plans, and keeps approvals close to the operator | Not blind automation or a hosted privileged agent |
| AI runtime path | Uses local-first or BYOK model access for reasoning against live evidence | Not a vendor-owned markup layer for every model call |

This is the compact architecture pattern behind the category.

1. Use the cloud credentials, kubeconfigs, repos, and AI keys already trusted on the operator machine.
2. Pull provider state, topology, cost, logs, and change context from the connected systems.
3. Ask questions or compare options using evidence-backed AI instead of free-floating chat output.
4. Translate the next step into a reviewed plan instead of jumping straight from alarm to apply.
5. Keep change approval with the operator rather than hiding it inside a hosted black box.
6. Validate the outcome against the same live infrastructure context after the action runs.
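The six steps above can be read as a small control loop. The sketch below is illustrative only: `Plan`, `Workflow`, and the hook functions are hypothetical names, not Clanker Cloud internals. What it shows is the ordering: nothing executes until the operator's `approve` callback returns `True`, and validation re-reads the same live context that produced the plan.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Plan:
    """A proposed change, held for review before anything runs."""
    summary: str
    steps: list[str]
    approved: bool = False


@dataclass
class Workflow:
    # Hypothetical hooks; a real runtime would wire these to provider APIs.
    gather: Callable[[], dict]             # read live state with local credentials
    propose: Callable[[dict], Plan]        # evidence-backed plan, not an action
    execute: Callable[[Plan], None]        # only ever called after approval
    validate: Callable[[dict, dict], bool] # compare before/after live state
    log: list[str] = field(default_factory=list)

    def run(self, approve: Callable[[Plan], bool]) -> bool:
        before = self.gather()
        plan = self.propose(before)
        self.log.append(f"proposed: {plan.summary}")
        plan.approved = approve(plan)      # explicit operator decision
        if not plan.approved:
            self.log.append("rejected: nothing executed")
            return False
        self.execute(plan)
        after = self.gather()              # re-read the same live context
        ok = self.validate(before, after)
        self.log.append(f"validated: {ok}")
        return ok
```

A toy run against an in-memory "cluster": a rejected plan leaves state untouched, and an approved one is executed and then checked.

```python
state = {"replicas": 2}
wf = Workflow(
    gather=lambda: dict(state),
    propose=lambda s: Plan("scale web to 3", ["set replicas=3"]),
    execute=lambda p: state.update(replicas=3),
    validate=lambda before, after: after["replicas"] == 3,
)
wf.run(approve=lambda p: False)  # state["replicas"] is still 2
wf.run(approve=lambda p: True)   # state["replicas"] is now 3
```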

| Dimension | Local-first AI DevOps | Hosted AI DevOps |
|---|---|---|
| Credential custody | Operator machine and existing local access patterns | Usually adds a hosted vendor trust boundary |
| AI API path | Can route directly from the local runtime to the chosen model provider | Typically transits a hosted vendor service |
| Grounding | Built around live provider and cluster state gathered locally | Often strongest where the vendor already owns the primary workflow |
| Pricing control | BYOK keeps model-provider choice and spend visible | Often bundles or resells model usage inside the product |
| Operator control | Reviewed plans and explicit approvals can stay close to the operator | Convenience often depends on more central orchestration |
| Best fit | Teams that care about custody, evidence, and explicit control | Teams that want vendor-managed convenience and accept the hosted boundary |
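The "AI API path" and "Pricing control" rows come down to where a model request is sent from and whose key it carries. The sketch below builds, but does not send, a request that goes straight from the local process to the chosen endpoint. It assumes an OpenAI-compatible `/v1/chat/completions` route, which many hosted providers and local servers expose (for example, Ollama listens on `localhost:11434` by default). `build_chat_request` and the environment-variable name are hypothetical.

```python
import json
import os
import urllib.request


def build_chat_request(base_url: str, model: str, prompt: str,
                       key_env: str = "") -> urllib.request.Request:
    """Build a chat request addressed directly to the chosen endpoint.

    Assumes an OpenAI-compatible /v1/chat/completions route. key_env names
    an environment variable holding the team's own (BYOK) API key; leave it
    empty for a local inference endpoint that needs no key.
    """
    body = json.dumps({
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }).encode("utf-8")
    headers = {"Content-Type": "application/json"}
    if key_env:
        # The key is read locally and sent only to the provider itself.
        headers["Authorization"] = f"Bearer {os.environ[key_env]}"
    return urllib.request.Request(
        base_url.rstrip("/") + "/v1/chat/completions",
        data=body, headers=headers, method="POST",
    )
```

Sending it is one `urllib.request.urlopen(req)` call. The only hops are the local process and the provider the team chose, so spend shows up on the team's own provider account.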

| Tool class | Best at | What still stays outside it | How local-first AI DevOps fits |
|---|---|---|---|
| Datadog or Dynatrace | Observability backends and telemetry analytics | Action planning and local credential custody | Adds grounded context and reviewed actions around existing telemetry |
| PagerDuty | On-call, escalation, and incident routing | Cross-provider evidence gathering and change review | Moves from alarm to investigation and plan in one workspace |
| Kubecost | Kubernetes cost allocation and FinOps visibility | Broader runtime and change context outside cost analysis | Keeps cost signals next to topology, incidents, and next actions |
| Spacelift | IaC orchestration, policies, and runners | Ad hoc investigation and multi-tool context gathering | Helps operators inspect, ask, compare, and review before execution |
| Portainer | Container and cluster management UI | Multi-cloud context and AI-assisted cross-system investigation | Broadens from cluster surface to cloud, repo, and cost context |
| AWS-native DevOps agent | Vendor-native AWS assistance | Multi-cloud coverage and local-first trust boundary | Fits teams that want provider-agnostic context and local custody |

The current support surface includes AWS, GCP, Azure, Tencent Cloud, Kubernetes, Cloudflare, Hetzner, DigitalOcean, Vercel, GitHub, and bring-your-own AI provider keys.

Support for Ansible-driven environments and Slurm-based compute workflows is planned. Neither is presented as current generally available (GA) coverage on this page.

Teams still need systems like Datadog or Dynatrace if they rely on long-term metrics, traces, logs, APM, or RUM as a primary backend.

Teams still need PagerDuty or an equivalent system if they depend on schedules, escalations, stakeholder notifications, and major-incident workflows.

Teams still keep tools like Spacelift when the primary problem is remote Terraform or OpenTofu orchestration across many stacks and policy gates.

An alert fires, the operator gathers provider state, checks topology and recent changes, then reviews a suggested action without bouncing across five consoles.
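The "checks recent changes" step is, at its core, a time-window filter over change events from several sources. The sketch below is a minimal illustration under stated assumptions: `ChangeEvent`, `suspect_changes`, and the two-hour default lookback are hypothetical. A real workspace would populate the events from provider APIs such as deploy history, Kubernetes events, or commits.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class ChangeEvent:
    source: str   # e.g. "github", "kubernetes", "vercel" (illustrative)
    target: str   # service or resource the change touched
    at: datetime


def suspect_changes(alert_at: datetime, service: str, events: list[ChangeEvent],
                    lookback: timedelta = timedelta(hours=2)) -> list[ChangeEvent]:
    """Changes to the alerting service inside the lookback window, newest first."""
    window_start = alert_at - lookback
    hits = [e for e in events
            if e.target == service and window_start <= e.at <= alert_at]
    return sorted(hits, key=lambda e: e.at, reverse=True)
```

The newest matching change is usually the first thing to review before drafting a suggested action.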

Cost data is easier to act on when the same workspace already shows the workloads, clusters, repos, and recent changes connected to the spend increase.
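A first step in connecting a spend increase to specific workloads is a per-workload delta between two billing periods. The sketch assumes cost data has already been exported as hypothetical `(workload, cost)` pairs. It does not reproduce any particular cost tool's API. The top entries are the workloads to line up against topology and recent changes.

```python
from collections import defaultdict


def spend_deltas(previous: list[tuple[str, float]],
                 current: list[tuple[str, float]]) -> list[tuple[str, float]]:
    """Per-workload spend change between two periods, largest increase first."""
    # totals[name] = [previous_total, current_total]
    totals: defaultdict[str, list[float]] = defaultdict(lambda: [0.0, 0.0])
    for name, cost in previous:
        totals[name][0] += cost
    for name, cost in current:
        totals[name][1] += cost
    deltas = [(name, cur - prev) for name, (prev, cur) in totals.items()]
    return sorted(deltas, key=lambda d: d[1], reverse=True)
```

A workload that appears only in the current period (a new batch job, for example) shows up with its full cost as the delta.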

Instead of guessing from raw IaC diffs alone, teams compare the planned change against live dependencies and current runtime context first.

The local MCP path means agent workflows can use the same trusted context the operator sees instead of inventing their own partial view.
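The Model Context Protocol (MCP) carries JSON-RPC 2.0 messages. Tools are discovered with `tools/list` and invoked with `tools/call`. The sketch below only serializes such requests. A real client first completes MCP's `initialize` handshake and sends messages over the server's transport. The tool name `describe_cluster`, its `context` argument, and the helper `mcp_request` are hypothetical; the actual tools and localhost address are covered by the Clanker Cloud docs.

```python
import itertools
import json
from typing import Any, Optional

_ids = itertools.count(1)  # JSON-RPC request ids must be unique per session


def mcp_request(method: str, params: Optional[dict[str, Any]] = None) -> str:
    """Serialize one JSON-RPC 2.0 request of the kind MCP clients send."""
    msg: dict[str, Any] = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        msg["params"] = params
    return json.dumps(msg)


# Discover the tools the local server exposes, then call one (hypothetical name).
list_tools = mcp_request("tools/list")
call_tool = mcp_request("tools/call", {"name": "describe_cluster",
                                       "arguments": {"context": "prod"}})
```

Because the agent talks to the same local process the operator uses, both see the same credentials-backed view of the infrastructure.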

The category is easier to understand when the operating surface is visible.

Operators ask what changed, what failed, and what to do next from one local-first surface.

Topology becomes part of the same investigation loop instead of living in a separate diagramming tool.

The local-first value is strongest when the operator can inspect intent before any create, modify, or destroy step runs.
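For Terraform, the create, modify, and destroy intent is already machine-readable before anything runs: `terraform show -json <planfile>` emits a `resource_changes` array, and each entry's `change.actions` is a list such as `["create"]`, `["update"]`, `["delete"]`, `["no-op"]`, or a replacement (`["delete", "create"]` or `["create", "delete"]`). The sketch below summarizes that intent for review. `summarize_plan`, `needs_extra_review`, and the "anything destructive gets extra review" policy are hypothetical illustrations, not Clanker Cloud's behavior.

```python
import json
from collections import Counter


def summarize_plan(plan_json: str) -> Counter:
    """Count intended actions in `terraform show -json` plan output."""
    plan = json.loads(plan_json)
    counts: Counter = Counter()
    for rc in plan.get("resource_changes", []):
        actions = rc["change"]["actions"]
        if set(actions) == {"create", "delete"}:
            counts["replace"] += 1          # either replacement ordering
        elif actions in (["no-op"], ["read"]):
            continue                        # nothing changes in the infrastructure
        else:
            counts[actions[0]] += 1         # "create", "update", or "delete"
    return counts


def needs_extra_review(counts: Counter) -> bool:
    # Illustrative policy: surface any destructive step before apply.
    return counts["delete"] > 0 or counts["replace"] > 0
```

Counting by action keeps the review focused on the lines that matter most: deletes and replacements.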

These are short product demos tied to the same local-first operating model described on this page.

Parallel scan across connected infrastructure with evidence-backed findings.

Security findings tied back to the same local-first infrastructure workspace.

Founder at Clanker Cloud. Public contact for privacy, procurement, and product review requests at bogdan@novlabs.ai.

Founder at Clanker Cloud and public beta contact listed on the account page for direct questions during beta.

The desktop app is built on the public Clanker CLI, so the core engine and command surface are inspectable on GitHub.

The docs explain installation, provider setup, and the local MCP command surface instead of relying on generic marketing claims.

The security page documents local credential custody, bring-your-own AI keys, and reviewed-plan execution in concrete terms.

Added the canonical local-first AI DevOps page plus high-intent comparisons for observability, incident response, cost, IaC, container ops, and AWS-native agent workflows.

App-intent pages now carry consistent operating-system, pricing, docs, GitHub, and supported-platform structured data.

llms.txt, llms.json, and sitemap entries now expose the new category and comparison pages directly.

The current beta exposes desktop downloads through the account and downloads flow.

Agents can reach the running app over localhost, and teams can choose their own model provider or local inference endpoint.

The current beta includes live demos and pages covering multi-provider investigation, security scanning, and reviewed execution plans.

The current site and support surface now include Vercel in the live provider set, while Ansible and Slurm are called out as upcoming support rather than current coverage.

Telemetry backend versus local-first context and action workspace.

Enterprise observability and AIOps backend versus local-first operator workflow.

On-call routing versus live investigation and reviewed next actions.

Kubernetes cost depth versus broader runtime and change context.

Fleet-scale IaC orchestration versus local-first context and plan review.

Container admin surface versus multi-cloud context workspace.

Vendor-native AWS assistance versus provider-agnostic local-first operations.

Direct architectural tradeoffs between hosted and local operating models.

No. Observability backends store telemetry. Local-first AI DevOps is the workspace that gathers live context from those systems and adjacent provider surfaces, then helps the operator investigate, compare options, and approve actions.

No. The point is local custody and local routing of privileged access, not disconnecting from cloud APIs or chosen AI providers.

Clanker Cloud is the practical implementation described on this page: a local-first desktop workspace for infrastructure context, reviewed plans, and explicit operator-approved actions.

The current positioning includes AWS, GCP, Azure, Tencent Cloud, Kubernetes, Cloudflare, Hetzner, DigitalOcean, Vercel, GitHub, and bring-your-own AI provider keys. Ansible and Slurm are planned next and are not presented as current support on this page.

Use the product definition and architecture pages when you want the category translated into the current Clanker Cloud workflow.